Trust the balance process: Data and insights

Hi, I’m Brian Chang, an analyst on VALORANT’s Insights team, here with Insights Researcher Coleman “Altombre” Palm. We’re here to talk to you about how we use data to help keep our game balanced and enjoyable for you.

In this article, we’ll first cover our general philosophy behind how we use data to help inform changes to our game. We’ll then walk you through an example that shows how past data was used to inform changes to an Agent.

DATA IN GAME BALANCE PHILOSOPHY

On the balance team, our primary goal is to make sure that the game is a fair experience for every player. You can read David “MILKCOW” Cole’s insightful post on game balance in VALORANT here.

One of the major roles that data plays during the game balance process is to help inform designers about the state of the game. Data can tell us when your experience appears to be in a non-ideal state.

Data in this case is used as a diagnostic tool to tell us which parts of our game might need tuning. This data can come from in-game telemetry (e.g. a particular Agent’s winrate is too high or too low) or from player research (e.g. a majority of players find an Agent frustrating to play against).

However, we deliberately avoid being completely data-driven when making balance decisions, especially this early on. VALORANT is still relatively fresh in your hands, and we ourselves don’t yet fully know what “balanced” looks like in our game.

For example, we don’t know how far a map can drift from a 50/50 attacker/defender split before the player experience becomes unfun. There’s also the added complexity that Agent strength and popularity affect map balance. A Sentinel-heavy meta would likely make maps more defender-sided, whereas a Duelist-heavy meta would likely make maps seem more attacker-favored (things get even messier once you consider how each Agent affects each individual map). Data won’t be the “be-all and end-all” of game balance, but rather one of the many tools we can use to understand the state of the game.

That being said, we’re constantly monitoring both our in-game data and your direct input via surveys, so that we can refine our understanding of how the data correlates with the actual state of balance in the game. Our hope is that this refined understanding will eventually help us identify more quickly which aspects of the game seem off, and how we can adjust them.

WHAT WE’RE TRACKING

Today, we look at a number of data points to assess the strength and health of each part of our game. Split by system, here are some of the metrics we track:

- Agents: Winrate (by rank, map), presence (how often an Agent is picked), mastery curves (how many games on an Agent it takes for a player to reach that Agent’s “true” winrate), breadth vs. depth (how broadly popular an Agent is vs. how deeply each player engages with it)

- Maps: Round winrate by side (by rank), average round outcome by map (whether and where the spike was planted)

- Weapons: Weapon popularity by round, weapon matchups (e.g. how well a Vandal user does against a Phantom user in a duel)

This isn’t to say that we use only the above metrics to assess the health of our game, but hopefully the examples give you a picture of some of the things we track.
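To make a few of these metrics more concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not our actual pipeline: the record types (`PlayerGame`, `Round`) and their fields are hypothetical, presence is assumed to mean the share of matches in which at least one player picked the Agent, the mastery curve is assumed to be winrate on each player’s Nth game on an Agent (the point where the curve flattens out would approximate the “true” winrate), and map balance is shown as attacker round winrate per map.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class PlayerGame:
    """One player's appearance in one match (hypothetical schema)."""
    match_id: str
    player_id: str
    agent: str
    won: bool


@dataclass
class Round:
    """One played round on a map (hypothetical schema)."""
    map_name: str
    winner_side: str  # "attack" or "defense"


def presence(games: list[PlayerGame]) -> dict[str, float]:
    """Share of matches in which each Agent was picked by at least one player."""
    all_matches = {g.match_id for g in games}
    matches_by_agent: dict[str, set[str]] = defaultdict(set)
    for g in games:
        matches_by_agent[g.agent].add(g.match_id)
    return {a: len(ms) / len(all_matches) for a, ms in matches_by_agent.items()}


def mastery_curve(games: list[PlayerGame], agent: str) -> dict[int, float]:
    """Winrate on `agent`, keyed by each player's 1st, 2nd, ... game on it.

    Assumes `games` is already sorted chronologically.
    """
    games_so_far: dict[str, int] = defaultdict(int)
    wins: dict[int, int] = defaultdict(int)
    totals: dict[int, int] = defaultdict(int)
    for g in games:
        if g.agent != agent:
            continue
        games_so_far[g.player_id] += 1
        n = games_so_far[g.player_id]
        totals[n] += 1
        wins[n] += int(g.won)
    return {n: wins[n] / totals[n] for n in sorted(totals)}


def attacker_round_winrate(rounds: list[Round]) -> dict[str, float]:
    """Fraction of rounds won by the attacking side on each map."""
    attack_wins: dict[str, int] = defaultdict(int)
    totals: dict[str, int] = defaultdict(int)
    for r in rounds:
        totals[r.map_name] += 1
        attack_wins[r.map_name] += int(r.winner_side == "attack")
    return {m: attack_wins[m] / totals[m] for m in totals}


# Toy data for illustration only.
games = [
    PlayerGame("m1", "p1", "Sage", True),
    PlayerGame("m1", "p2", "Jett", False),
    PlayerGame("m2", "p1", "Sage", False),
    PlayerGame("m2", "p3", "Phoenix", True),
]
rounds = [
    Round("Bind", "attack"), Round("Bind", "defense"),
    Round("Haven", "attack"), Round("Haven", "attack"),
    Round("Haven", "defense"), Round("Haven", "attack"),
]
print(presence(games))                 # {'Sage': 1.0, 'Jett': 0.5, 'Phoenix': 0.5}
print(mastery_curve(games, "Sage"))    # {1: 1.0, 2: 0.0}
print(attacker_round_winrate(rounds))  # {'Bind': 0.5, 'Haven': 0.75}
```

In practice each of these would also be split by rank and map, as in the list above; the sketch leaves that out to keep the core calculation visible.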

Agent Balance: A Sage case study

For the rest of this post, we’ll focus on Sage, an Agent who has been on the nerfing block quite a few times since our June launch. What led to us making continued changes to Sage, and how did those changes affect her strength?

WHICH AGENT IS TOO STRONG?

The first thing we need to establish is which metrics we use to assess Agent strength. In League of Legends, the primary metrics are presence (how often a champion is picked) and winrate (% of games won per champion). These are split across a number of skill brackets, ranging from the average player all the way up to the pro level.

Immediately, we ran into a major issue when trying to apply League’s metrics to balance in VALORANT. Our game allows mirror matchups (the same Agent on both teams), so an Agent that is very strong, or picked in almost every game, could still appear to have a winrate close to 50%: in a mirror match, every time that Agent wins, it also loses.

To resolve this issue, we decided to look at an Agent’s non-mirror winrate (that is, an Agent’s winrate in games where the enemy team does not have that Agent). This removes the “guaranteed win AND loss” games where the same Agent is on both teams. There are some potential risks to this approach (it introduces some selection bias, which we won’t get into now), but we determined that non-mirror winrate was our best proxy for an Agent’s power from an in-game data perspective.
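Here is a minimal Python sketch of the difference between a naive winrate and a non-mirror winrate. The `Match` record, its fields, and the two-Agents-per-team toy data are hypothetical simplifications for illustration; real matches have five Agents per side. In the naive version, a mirror match contributes one win and one loss for the Agent, while the non-mirror version only counts games where the enemy team lacks that Agent.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Match:
    """Hypothetical match record: each team's Agents and the result."""
    team_a: list[str]  # Agents picked by team A
    team_b: list[str]  # Agents picked by team B
    a_won: bool        # True if team A won the match


def _rate(wins: int, games: int) -> Optional[float]:
    """Winrate, or None when there are no games to measure."""
    return wins / games if games else None


def winrates(matches: list[Match], agent: str) -> tuple[Optional[float], Optional[float]]:
    """Return (naive winrate, non-mirror winrate) for `agent`."""
    naive_wins = naive_games = nm_wins = nm_games = 0
    for m in matches:
        # Look at the match from each team's perspective in turn.
        for team, enemy, won in ((m.team_a, m.team_b, m.a_won),
                                 (m.team_b, m.team_a, not m.a_won)):
            if agent not in team:
                continue
            naive_games += 1
            naive_wins += int(won)
            if agent not in enemy:  # non-mirror: the enemy team lacks this Agent
                nm_games += 1
                nm_wins += int(won)
    return _rate(naive_wins, naive_games), _rate(nm_wins, nm_games)


matches = [
    Match(["Sage", "Jett"], ["Sage", "Brimstone"], True),  # mirror
    Match(["Sage", "Jett"], ["Sage", "Omen"], False),      # mirror
    Match(["Sage", "Omen"], ["Jett", "Phoenix"], True),    # non-mirror, Sage wins
    Match(["Raze", "Jett"], ["Sage", "Cypher"], False),    # non-mirror, Sage wins
]
naive, non_mirror = winrates(matches, "Sage")
print(f"naive={naive:.3f}, non-mirror={non_mirror:.3f}")  # naive=0.667, non-mirror=1.000
print(winrates(matches[:2], "Sage"))                       # (0.5, None)
```

The last line shows the problem described above: restricted to the two mirror matches, Sage’s naive winrate sits at exactly 50% no matter how those games go, and there are no non-mirror games left to measure her actual power.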

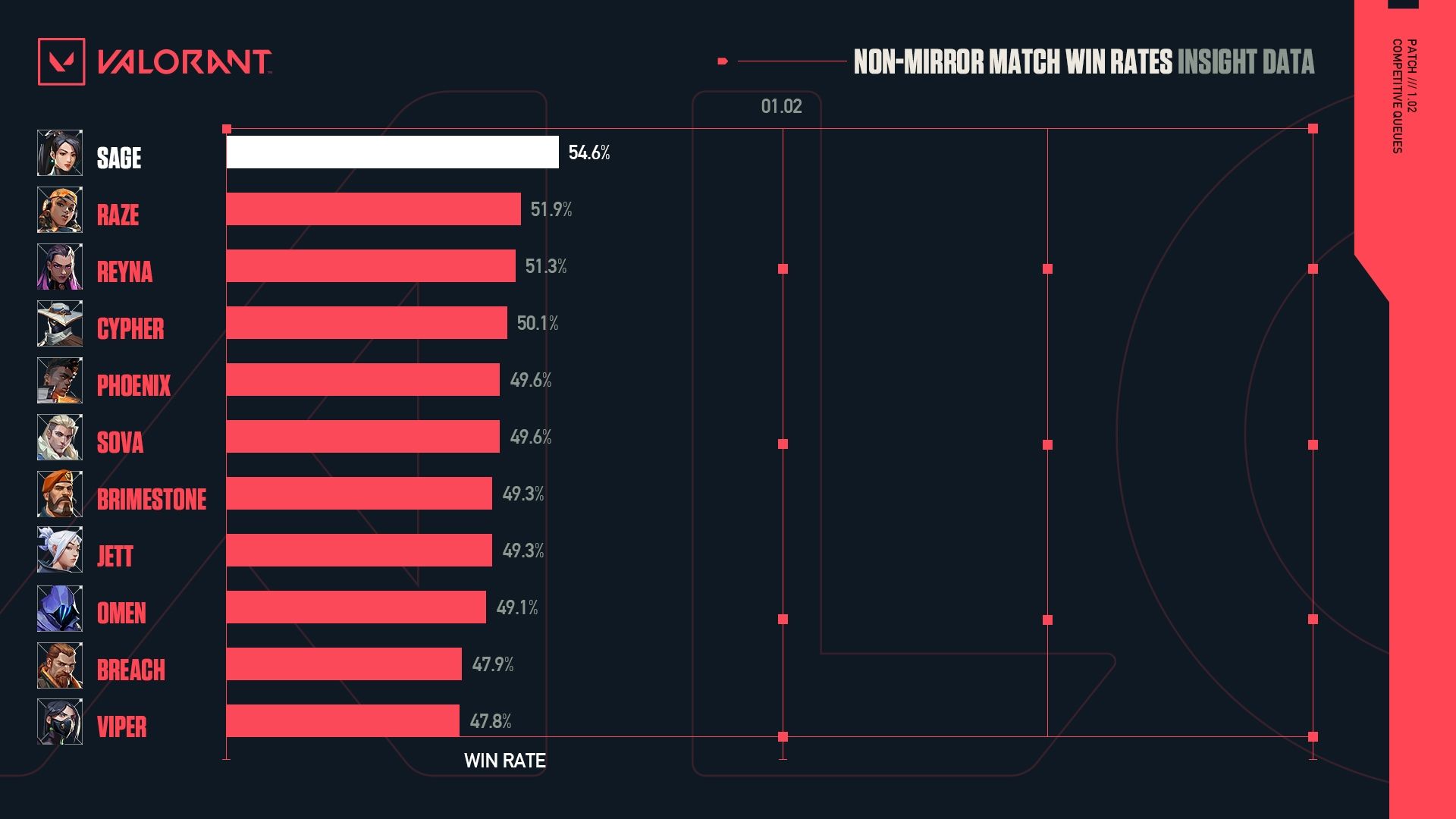

Here’s what non-mirror winrates looked like in patch 1.02, the first patch with the Competitive queue live. Keep in mind that Sage was nerfed twice before this patch, in Closed Beta patch 0.50 as well as launch patch 1.00.

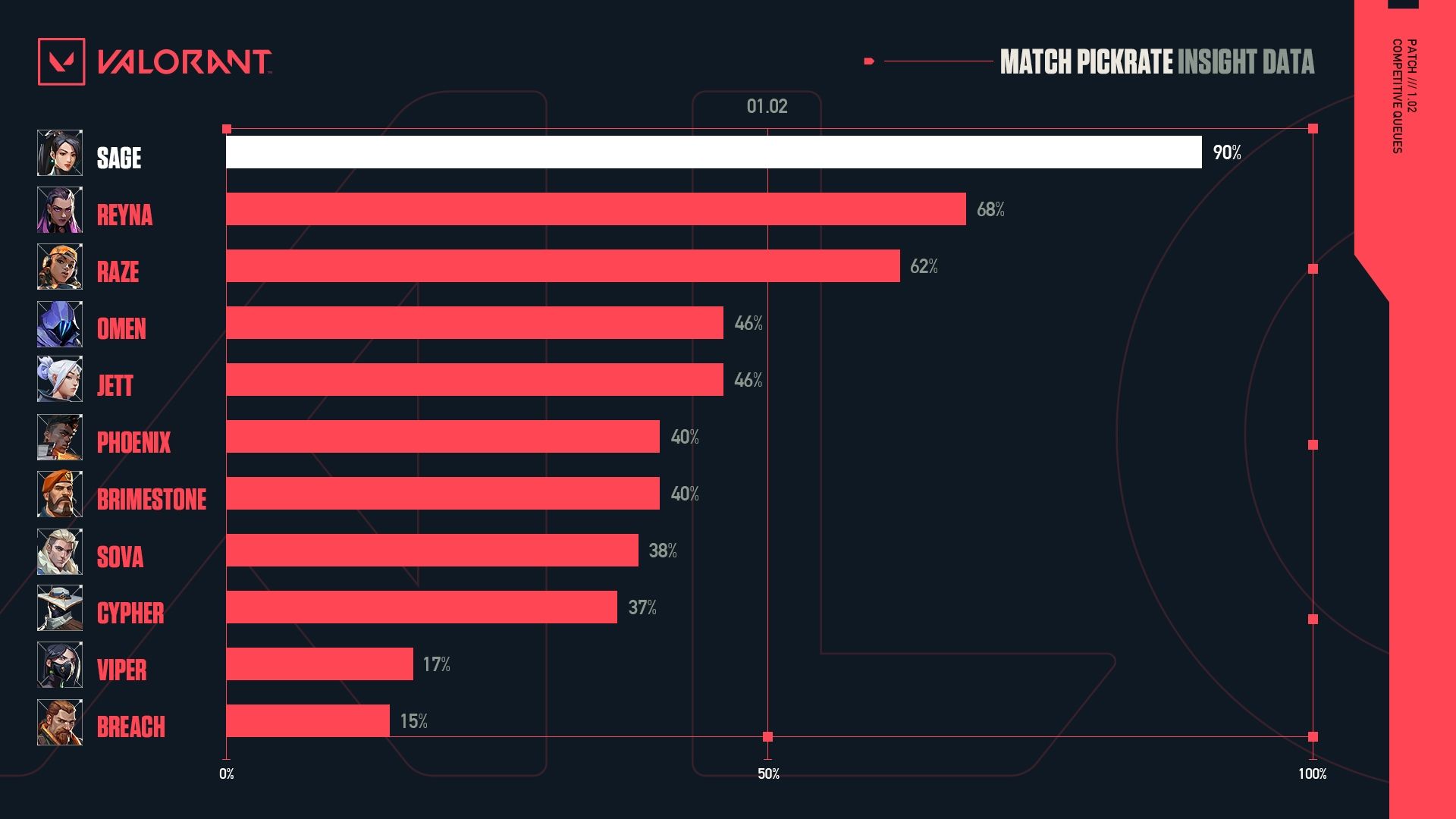

During that same time, here’s what presence looked like for Agents in competitive queues. Some of this is because Sage is one of the five Agents unlocked for free when a player joins VALORANT, but her presence was still consistently very high.

WHY IS AN AGENT STRONG?

It was clear from the data that Sage was in a tier of her own. The winrate numbers Sage was putting up merited further discussion about what we could do to tone her down.

So far, the data had told us which Agent was too strong. But we wanted to better understand why she was so strong. There are a number of data points we could look at to understand why:

- Direct feedback from you about why you believe Sage is strong, both in surveys and in direct conversations

- Breakouts of Agent strength by side (to understand how pronounced Sage’s strengths are on attack vs. defense)

- Breakouts of Agent strength/presence by rank (to understand if there are discrepancies across skill brackets)

- Ability-specific data (average amount of healing Sage does in a round, round winrate when Sage uses her ultimate compared to when other Agents use theirs, etc.)
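The breakouts above are mostly the same grouped calculation with a different grouping key. Below is a minimal Python sketch, assuming a hypothetical per-round record (`AgentRound`) with side, rank bracket, ultimate usage, healing, and round result; the field names and toy rows are illustrative only, and for brevity it skips the non-mirror filter described earlier.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable


@dataclass
class AgentRound:
    """One Agent's participation in one round (hypothetical schema)."""
    agent: str
    side: str       # "attack" or "defense"
    rank: str       # rank bracket of the match
    used_ult: bool  # whether this Agent used their ultimate this round
    healing: int    # HP healed this round (0 for Agents without heals)
    won: bool       # whether this Agent's team won the round


def grouped_winrate(rows: list[AgentRound],
                    key: Callable[[AgentRound], str]) -> dict[str, float]:
    """Round winrate for each group produced by `key`."""
    wins: dict[str, int] = defaultdict(int)
    totals: dict[str, int] = defaultdict(int)
    for r in rows:
        k = key(r)
        totals[k] += 1
        wins[k] += int(r.won)
    return {k: wins[k] / totals[k] for k in totals}


# Toy rows for illustration only.
rows = [
    AgentRound("Sage", "attack", "Gold", False, 60, True),
    AgentRound("Sage", "defense", "Gold", True, 120, True),
    AgentRound("Sage", "defense", "Diamond", False, 0, False),
    AgentRound("Jett", "attack", "Gold", True, 0, False),
]
sage = [r for r in rows if r.agent == "Sage"]

by_side = grouped_winrate(sage, key=lambda r: r.side)  # {'attack': 1.0, 'defense': 0.5}
by_rank = grouped_winrate(sage, key=lambda r: r.rank)  # {'Gold': 1.0, 'Diamond': 0.0}

# Rounds in which an ultimate was used: Sage's ult vs. everyone else's.
ult_rounds = [r for r in rows if r.used_ult]
ult_compare = grouped_winrate(
    ult_rounds, key=lambda r: "Sage" if r.agent == "Sage" else "other"
)  # {'Sage': 1.0, 'other': 0.0}

avg_healing = sum(r.healing for r in sage) / len(sage)  # 60.0
```

Keeping the grouping key as a parameter means a new breakout (by map, by patch) is a one-line lambda rather than a new function.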

All of these data points are intended to give more context on which components of Sage are strong and potentially overtuned. The tricky part is making sure that we nerf an Agent without compromising their identity as a part of VALORANT. If we nerf the strongest part of every Agent, we risk losing what makes them special. Equipped with this data, our designers explored ways to keep Sage from being a “must-pick”, while preserving her identity as a premium stall/support utility character.

It’s important to keep in mind that data is only one of the tools that can be used to assess Agent strength. A huge part of understanding and refining an Agent’s kit comes from the vast knowledge and experience that the designers bring to the table. Our design philosophy is ultimately what drives our game forward, and no amount of data is useful unless we keep our core design principles at the forefront of game balance.

In the end, the balance team decided to hit both her healing and her stalling, but in different ways. Healing potential was cut across the board (longer cooldown, less healing per cast), while stalling was changed to allow more counterplay (Barrier Orb now takes time to fortify, and Slow Orb’s size was reduced).

WHAT THINGS LOOK LIKE TODAY

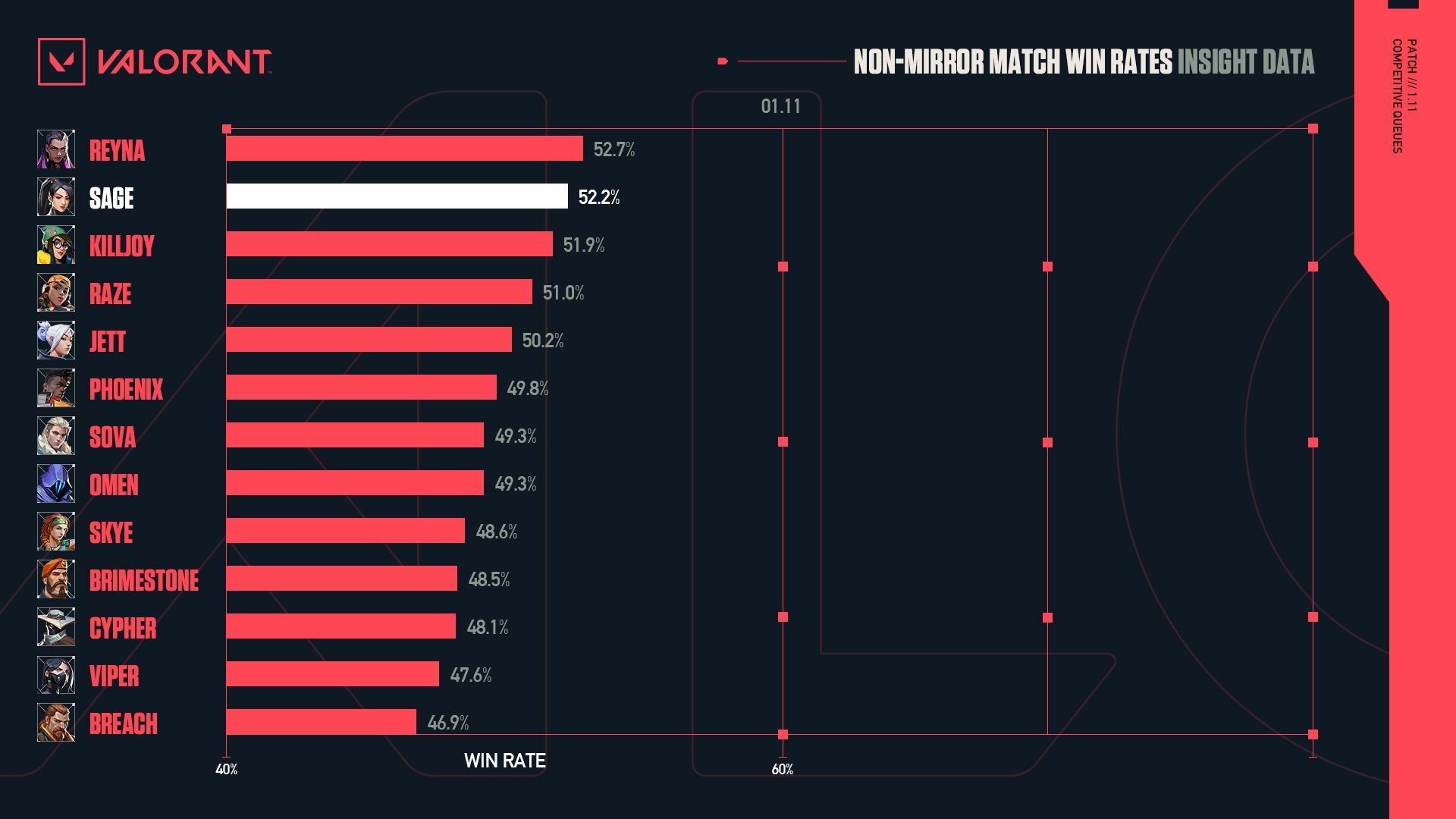

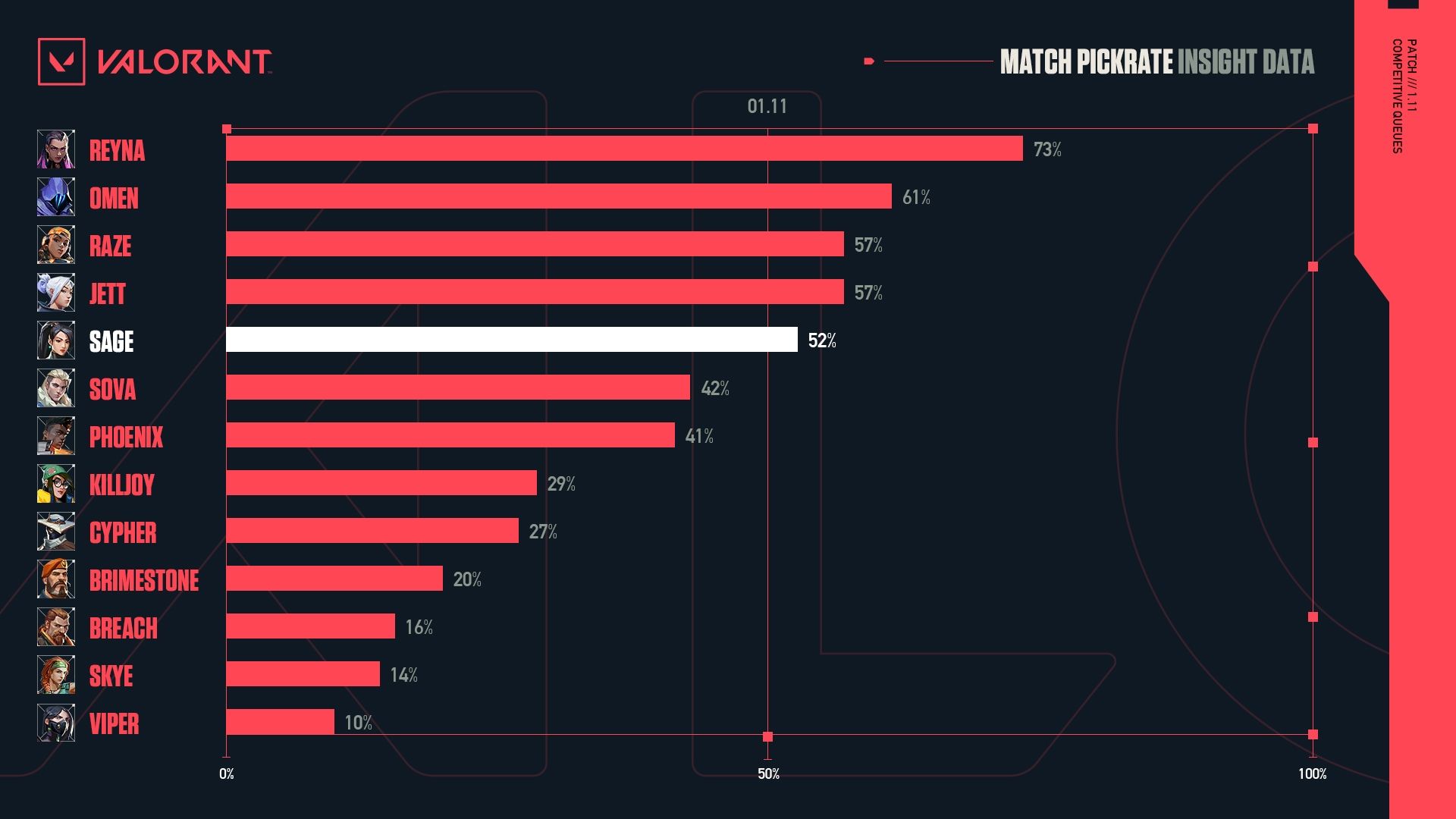

So after we made those changes, what do things look like? Here’s what Sage’s numbers looked like in competitive queues in patch 1.11:

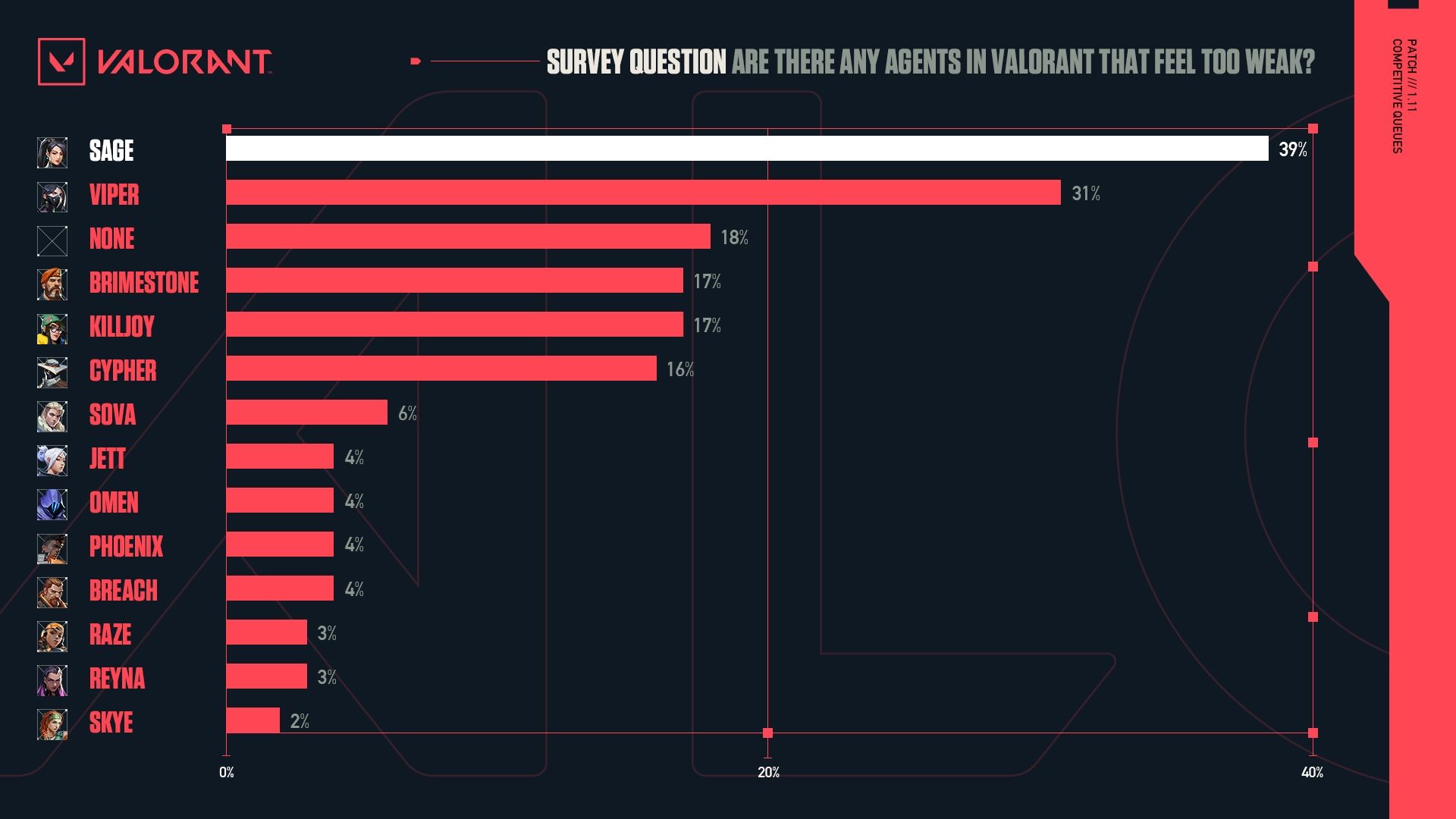

Sage is still quite strong; in fact, she has never fallen out of the top three strongest Agents at any point, in any skill bracket, since launch. Her presence is also in a more reasonable state (hovering around 50%). However, public perception is that Sage was nerfed too hard: 38% of players surveyed believe that Sage is currently too weak in patch 1.11.

MOVING AHEAD

Currently, our data suggests that Sage is in a relatively healthy state. There are, however, a number of factors we’ll need to understand better moving forward:

- Release of new Agents and maps, and how they affect Agent strength: With the release of Icebox and (more importantly) Skye, we may see a meta shift that makes Sage too weak. Hypothetically, another Agent who can heal allies might compete with Sage for the same slot and make her less relevant (for the record, we don’t think this will happen, but it’s possible).

- Pro-play data: One thing we’re still refining is our understanding of the pro-play meta, and how data from the highest level of VALORANT should affect our balance philosophy. If we compare data from professional matches around the world to Radiant-level matches, there’s still a huge discrepancy in both Agent presence and non-mirror winrates.

- Public perception: Although our in-game telemetry tells us that Sage is in a good spot balance-wise, survey results tell us that players feel Sage is too weak. We’ll track how perception changes (if at all) over time, and assess what steps to take if players remain skeptical of Sage’s strength.

If you made it this far, thanks for sticking with us through this small example of how data is used over on the VALORANT balance team. We hope this gave you some visibility into how and why decisions are made (and hopefully can convince you that Sage isn’t completely useless…).